June 8, 2018

From the Front Page of HPCwire:

(https://www.hpcwire.com/2018/06/04/simulating-quantum-computers-with-data-vortex/)

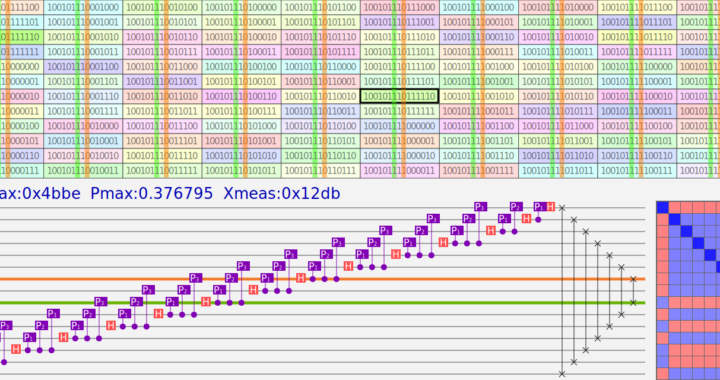

Data Vortex Technologies is tackling one of the greatest challenges in high performance computing: simulation of quantum computers. The main difficulty is that the amount of memory and processing power needed to simulate a general quantum algorithm grows exponentially with the number of quantum bits (qubits). During a simulation, it is necessary to store and repeatedly process 2^n complex floating-point numbers, where n is the number of qubits, in a distributed system; depending on the problem, this ranges from terabytes to petabytes of data and beyond. For this reason, the largest number of qubits that can be simulated on any large classical computer is around 50.
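To make the exponential growth concrete, here is a minimal sketch of the memory arithmetic. It assumes each amplitude is stored as a double-precision complex number (16 bytes); that storage format, and the helper names `state_vector_bytes` and `human_readable`, are illustrative assumptions and not details taken from the article.

```python
def state_vector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold all 2**num_qubits complex amplitudes.

    bytes_per_amplitude=16 assumes double-precision complex numbers
    (two 8-byte floats); this is an assumption, not a stated fact.
    """
    return (2 ** num_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:g} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (30, 40, 50):
        print(f"{n} qubits: {human_readable(state_vector_bytes(n))}")
```

Under this assumption each additional qubit doubles the memory footprint: 30 qubits need 16 GiB, 40 qubits need 16 TiB, and 50 qubits need 16 PiB, which matches the terabytes-to-petabytes range described above.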

What makes the problem even more demanding is that all this data must also be communicated between the compute nodes, repeatedly and sufficiently fast. This is where Data Vortex comes into play: part of our research focuses on investigating techniques that take advantage of this novel network to achieve this goal. Currently we are simulating quantum programs on the DV206 system HYPATIA, a small 64-node testbed powered by off-the-shelf Intel processors, which is capable of running quantum programs of up to 40 qubits. Our programs are fully scalable and can simulate more qubits on larger machines.
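The sketch below illustrates why inter-node communication arises at all. It uses a partitioning scheme common in distributed state-vector simulators, in which the high-order bits of an amplitude's index select the node that stores it. This is an assumption for illustration only; the article does not describe how the Data Vortex programs lay out their data, and the function names `split_qubits` and `partner_rank` are hypothetical.

```python
from typing import Optional, Tuple


def split_qubits(num_qubits: int, num_nodes: int) -> Tuple[int, int]:
    """Return (local_qubits, global_qubits) for a power-of-two node count.

    Global qubits are the index bits that select the node; local qubits
    are the index bits that select an amplitude within a node.
    """
    global_qubits = num_nodes.bit_length() - 1
    if num_nodes < 1 or (1 << global_qubits) != num_nodes:
        raise ValueError("num_nodes must be a power of two")
    if global_qubits > num_qubits:
        raise ValueError("more nodes than amplitudes")
    return num_qubits - global_qubits, global_qubits


def partner_rank(rank: int, target_qubit: int, local_qubits: int) -> Optional[int]:
    """Node that holds the paired amplitudes for a single-qubit gate.

    A gate on a local qubit pairs amplitudes stored on the same node, so
    no exchange is needed and None is returned. A gate on a global qubit
    pairs each amplitude with one on the node whose rank differs in
    exactly one bit.
    """
    if target_qubit < local_qubits:
        return None
    return rank ^ (1 << (target_qubit - local_qubits))


if __name__ == "__main__":
    local, global_ = split_qubits(num_qubits=40, num_nodes=64)
    print(f"local qubits: {local}, global qubits: {global_}")
    print("gate on qubit 10, node 3 ->", partner_rank(3, 10, local))
    print("gate on qubit 39, node 0 ->", partner_rank(0, 39, local))
```

With 40 qubits on 64 nodes, this layout gives 34 local and 6 global qubits. Any gate that touches one of the global qubits forces a pair of nodes to exchange their amplitudes, which is why network performance matters as much as memory capacity.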